How We Proved Deepdesk Saves 15% in Costs (and How Hard That Was)

Deepdesk saves contact center agents about 20% of the characters they type. This is easy to measure. But contact centers care most about Average Handling Time (AHT). Measuring the effect Deepdesk has on AHT turned out to be harder than we thought, but we found a way to do it.

Deepdesk and AI in chat channels

At Deepdesk we empower the agent with AI. We do so by giving real-time suggestions on what to type and where to link to. All the agent has to do is select the right answer instead of typing it. This makes the agent happy, and our (safe) assumption is that not typing also saves time and thus money.

We use Assisted Text to measure our performance. Assisted Text is the percentage of text that is assisted by Deepdesk, and thus not written by the agent. For a message it would look like this:

Let’s say that an agent wrote 1000 characters during a conversation and our autocomplete supplied 200 of those characters. We could then say that we saved 20% of the agent's time (and her employer's money). Sounds great, doesn’t it?
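As a minimal sketch, the Assisted Text arithmetic from this example can be written in a few lines of Python. The function name `assisted_text_pct` is hypothetical and chosen for illustration; it is not part of any Deepdesk API.

```python
def assisted_text_pct(total_chars: int, suggested_chars: int) -> float:
    """Percentage of the final text that came from accepted suggestions."""
    if total_chars <= 0:
        raise ValueError("total_chars must be positive")
    if not 0 <= suggested_chars <= total_chars:
        raise ValueError("suggested_chars must be between 0 and total_chars")
    return 100.0 * suggested_chars / total_chars


# The example from the text: 200 of 1000 characters came from suggestions.
print(assisted_text_pct(1000, 200))  # 20.0
```

Note that this measures characters, not time. Whether 20% fewer typed characters also means 20% less handling time is exactly the open question.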

AHT as a contact center metric

Contact centers use metrics that track agent productivity. Besides Average Handling Time (AHT), there are Chats per Hour, Closed Conversations per Hour, and Mentions or Messages per Hour. In essence, they all plot some measure of output against the agent's 'handling time'. We assume that Assisted Text, our metric, will reduce handling time, our customers’ metric. But does it? What if the agent just gets lazy, or keeps scrolling through options and wastes the time that the suggestions were supposed to save?

AHT and external factors

The most straightforward approach would be to plot the AHT and the use of Deepdesk over a period of time and compare the two. But here is the thing: AHT can vary for many reasons. The company may have just released a new (problematic) product, new agents may have come in via a different company, there may have been network issues, and so on. The mere fact that AHT goes up or down doesn’t mean that Deepdesk did a poor or great job. There are just too many external factors.

Different agents, different performance levels

What we need to know is whether Deepdesk helps agents work faster. Our first thought was to run an A/B test. As it turned out, this was not an option, since it would disturb the workflow of the agents too much.

So, what to do? Our second idea was to look at different agents and their performance. Some agents use Deepdesk suggestions a lot, some hardly or not at all. If Deepdesk is indeed effective we should see a correlation: the more an agent uses Deepdesk, the faster the agent should be (the lower his/her AHT). But there is a catch here (bear with us, almost there). Maybe agents who use Deepdesk more are more skilled in general and thus faster anyway.

Do we have a third option? What if there is a way to show that the more an individual agent uses Deepdesk over time, the faster the agent will work? Actually, there is.

If an agent uses Deepdesk more or less, how does that impact his/her AHT?

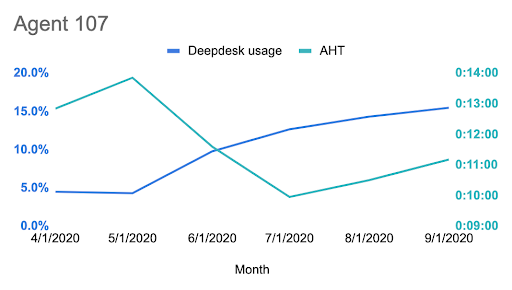

Let us take a real-life example of agent 107.

One agent can be a coincidence. We need to know what the trend is for many agents.

The trend: growing with Deepdesk, growing in speed

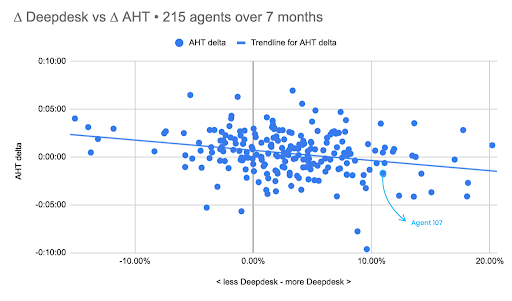

In the diagram, each dot represents an agent who sent at least 50 messages per month over a period of 7 months. Agent 107, mentioned above, is circled in light blue.

On the horizontal axis, we see the change in Deepdesk usage in percentage points (∆ Deepdesk).

On the vertical axis, we have the change in AHT in seconds (∆ AHT).
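To make the two axes concrete, here is a minimal sketch of how per-agent changes and a trend line could be computed. It assumes that each change is the last month minus the first month, which the article does not specify, and the records are made-up illustration values, not Deepdesk data. All names are hypothetical.

```python
from collections import defaultdict

# Hypothetical records: (agent_id, month, usage_pct, aht_seconds).
# These numbers are invented for illustration only.
records = [
    ("a1", 1, 10.0, 540.0), ("a1", 7, 16.0, 500.0),
    ("a2", 1, 20.0, 480.0), ("a2", 7, 18.0, 495.0),
    ("a3", 1, 5.0, 600.0), ("a3", 7, 15.0, 540.0),
]


def agent_deltas(rows):
    """Return {agent: (delta_usage, delta_aht)}, last month minus first month."""
    by_agent = defaultdict(list)
    for agent, month, usage, aht in rows:
        by_agent[agent].append((month, usage, aht))
    deltas = {}
    for agent, obs in by_agent.items():
        obs.sort()  # order observations by month
        (_, usage_first, aht_first) = obs[0]
        (_, usage_last, aht_last) = obs[-1]
        deltas[agent] = (usage_last - usage_first, aht_last - aht_first)
    return deltas


def ols_slope(points):
    """Least-squares slope of y on x for a list of (x, y) pairs."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    return sxy / sxx


deltas = agent_deltas(records)
slope = ols_slope(list(deltas.values()))
print(deltas)
print(f"{slope:.2f} seconds of AHT per percentage point of usage")
```

Because each agent is compared only with his/her own earlier self, fixed differences in skill between agents drop out of the comparison. A negative slope means that AHT falls as usage rises.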

The trend is clearly visible: on average, when agents use Deepdesk more often, their AHT goes down. The effect is substantial: if an agent increases his/her Deepdesk usage by 5 percentage points, his/her AHT goes down by 32 seconds, or 6%, on average. Extrapolating this trend linearly, an increase of 15 percentage points in Deepdesk use (not uncommon for our customers when they start working with us) would lead to a reduction in AHT of about 18% in only a couple of months. This naturally makes our customers (and us) very happy, as it is precisely what we are aiming for.
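The arithmetic behind these figures can be checked with a short sketch. It uses only the numbers quoted above and assumes the effect scales linearly with usage, which is an extrapolation rather than something the data in this article proves. The variable and function names are hypothetical.

```python
# Figures quoted in the article.
delta_usage_pp = 5.0      # increase in Deepdesk usage, in percentage points
delta_aht_seconds = 32.0  # average AHT reduction for that increase
delta_aht_pct = 6.0       # the same reduction as a percentage of AHT

# Implied baseline AHT: 32 seconds is 6% of roughly 533 seconds.
baseline_aht = delta_aht_seconds / (delta_aht_pct / 100.0)

# Effect per percentage point, under the linear assumption.
pct_per_pp = delta_aht_pct / delta_usage_pp
seconds_per_pp = delta_aht_seconds / delta_usage_pp


def projected_reduction(usage_increase_pp):
    """Return (percent, seconds) of AHT reduction under the linear assumption."""
    return pct_per_pp * usage_increase_pp, seconds_per_pp * usage_increase_pp


pct, seconds = projected_reduction(15.0)
print(f"implied baseline AHT: about {baseline_aht:.0f} s")    # about 533 s
print(f"15 points more usage: {pct:.0f}% or {seconds:.0f} s")  # 18% or 96 s
```

The implied baseline of roughly nine minutes per conversation follows directly from the two quoted numbers (32 seconds and 6%).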

For more on this story, learn how VodafoneZiggo brought down handling time by 15% within a couple of months.

Please reach out at hello@deepdesk.com or call +31202441750 if you have any questions or if you would like to lower your contact center cost as well.

Header Image by Glenn Carstens-Peters on Unsplash
